Education report calling for ethical AI use contains over 15 fake sources

Experts find fake sources in Canadian government report that took 18 months to complete.

A $100 replica Switch dock or $25 cardboard sleeve is needed to play the 3D re-releases.

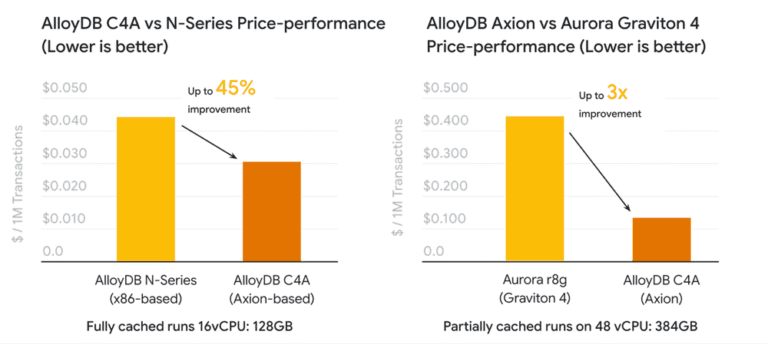

At Google Cloud Next ’25, we announced the preview of AlloyDB on C4A virtual machines, powered by Google Axion processors, our custom Arm-based CPUs. Today, we’re glad to announce that C4A virtual machines are generally available! With C4A, AlloyDB provides nearly 50% better price-performance than N series machines for transactional workloads, and up to 2x better throughput than Amazon’s Graviton4-based offerings, bringing substantial efficiency gains to your database operations. Let’s dive into the benefits of running high-performance databases and analytics engines on C4A.

Meeting the demands of modern data workloads

Business-critical applications require infrastructure that delivers high performance, cost-efficiency, high availability, robust security, scalability, and strong data protection. However, the relentless growth of data volumes and increasingly complex processing requirements, coupled with slowing traditional CPU advancements, can present significant hurdles. Furthermore, the rise of sophisticated analytics and vector search for generative AI introduces new challenges to maintaining performance and efficiency. These trends necessitate greater computing power, but existing CPU architectures don't always align perfectly with database operational needs, potentially leading to performance bottlenecks and inefficiencies.

C4A virtual machines are engineered to address these challenges head-on, bringing compelling advantages to your managed Google Cloud database workloads. They deliver significantly improved price-performance compared to N-series instances, translating directly into more cost-effective database operations. Purpose-built to handle data-intensive workloads that require real-time processing, C4A instances are well-suited for high-performance databases and demanding analytics engines.
Integrating Axion-based C4A VMs for AlloyDB underscores our commitment to adding this technology across our database portfolio, for a diverse range of database deployments.

AlloyDB on Axion: Pushing performance boundaries

AlloyDB, our PostgreSQL-compatible database service designed for the most demanding enterprise workloads, sees remarkable gains when running on Axion C4A instances, delivering:

- 3 million transactions per minute
- up to 45% better price-performance than N series VMs for transactional workloads
- up to 2x higher throughput and 3x better price-performance than Amazon Aurora PostgreSQL running on Graviton4

Gigaom independently validated performance and price-performance between AlloyDB (C4A) and Amazon Aurora for PostgreSQL, and published a detailed performance evaluation report.

AlloyDB on Axion supports shapes from 1 vCPU up to 72 vCPUs. It also includes a frequently requested intermediate 48 vCPU shape between 32 and 64 vCPUs. In particular, AlloyDB added support for a 1 vCPU shape with 8 GB of memory on C4A, a cost-effective option for development and sandbox environments. This new shape lowers the entry cost for AlloyDB by 50% compared to the earlier 2 vCPU option. The 1 vCPU shape is intended for smaller databases, typically in the tens of gigabytes, and does not come with uptime SLAs, even when configured for high availability.

Availability and getting started

Already, hundreds of customers have adopted C4A machine types, citing increased performance for their applications and reduced costs. They also love the fact that they can lower their costs by 50% using the 1 vCPU shape, fueling widespread adoption. And now that C4A machine types are generally available, customers are excited to leverage them for their production workloads.

Axion-based C4A machines for AlloyDB are now generally available in select Google Cloud regions. To deploy a new C4A machine for your new instance, or to upgrade your existing instance to C4A, simply choose C4A from the drop-down.
For more technical details, please consult the documentation pages for AlloyDB. For detailed pricing information, please refer to the AlloyDB pricing page. C4A vCPU and memory are priced the same as N2 virtual machines and provide better price-performance. For more information about Google Axion, please refer to Google Axion Processors.

Ready to get started? If you’re new to Google Cloud, sign up for an account via the AlloyDB free trial link. If you're already a Google Cloud user, head over to the AlloyDB console. Once you’re in the console, click "Create a trial cluster" and we'll guide you through migrating your PostgreSQL data to a new AlloyDB database instance.
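One way to read the price-performance claims above is as throughput per unit of cost. The post does not define the metric, so the sketch below assumes that definition; the throughput and hourly price figures are hypothetical placeholders, not published benchmarks or prices.

```python
def price_performance(throughput: float, cost: float) -> float:
    """Throughput delivered per unit of cost (assumed definition of the metric)."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return throughput / cost


def relative_gain(new_pp: float, baseline_pp: float) -> float:
    """Fractional improvement of new_pp over baseline_pp (0.45 means 45% better)."""
    return new_pp / baseline_pp - 1.0


# Hypothetical numbers for illustration only.
n_series = price_performance(throughput=1000.0, cost=1.00)  # baseline machine
c4a = price_performance(throughput=1450.0, cost=1.00)       # same price, more throughput

gain = relative_gain(c4a, n_series)
print(f"price-performance gain: {gain:.0%}")
```

Under this definition, when two machine types are priced the same, a given price-performance gain corresponds to the same gain in throughput.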

The AI web search company Perplexity is being hit by another lawsuit alleging copyright and trademark infringement, this time from Encyclopedia Britannica and Merriam-Webster. Britannica, the centuries-old publisher that owns Merriam-Webster, sued Perplexity in New York federal court on September 10th. In the lawsuit, the companies allege that Perplexity’s “answer engine” scrapes their websites, steals […]

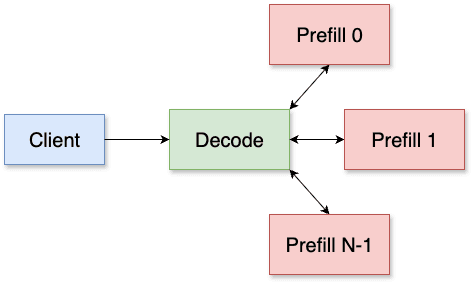

Key takeaways: PyTorch and vLLM have been organically integrated to accelerate cutting-edge generative AI applications, such as inference, post-training and agentic systems. Prefill/Decode Disaggregation is a crucial technique for enhancing...

Why do we still wrestle with documents in 2025? Spend some time in any data-driven organisation, and you’ll encounter a host of PDFs, Word files, PowerPoints, half-scanned images, handwritten notes, and the occasional surprise CSV lurking in a SharePoint folder. Business and data analysts waste hours converting, splitting, and cajoling those formats into something their Python […] The post Docling: The Document Alchemist appeared first on Towards Data Science.

Editor’s note: Rent the Runway is redefining how consumers engage with fashion, offering on-demand access to designer clothing through a unique blend of e-commerce and reverse logistics. As customer expectations around speed, personalization, and reliability continue to rise, Rent the Runway turned to Google Cloud’s fully managed database services to modernize its data infrastructure. By migrating from a complex, self-managed MySQL environment to Cloud SQL, the company saved more than $180,000 annually in operational overhead. With Cloud SQL now supporting real-time inventory decisions, Rent the Runway has built a scalable foundation for its next chapter of growth and innovation.

Closet in the cloud, clutter in the stack

Rent the Runway gives customers a “Closet in the Cloud”: on-demand access to designer clothing without the need for ownership. We offer both a subscription-based model and one-time rentals, providing flexibility to serve a broad range of needs and lifestyles.

We like to say Rent the Runway runs on two things: fashion and logistics. When a customer rents one of our items, it kicks off a chain of events most online retailers never have to think about. That complex e-commerce and reverse logistics model involves cleaning, repairing, and restocking garments between uses. To address both our operational complexity and the high expectations of our customers, we’re investing heavily in building modern, data-driven services that support every touchpoint.

Out: Manual legacy processes

Our legacy database setup couldn’t keep up with our goals. We were running self-managed MySQL, and the environment had grown… let’s say organically. Disaster recovery relied on custom scripts. Monitoring was patchy. Performance tuning and scaling were manual, time-consuming, and error-prone. Supporting it all required a dedicated DBA team and a third-party vendor providing 24/7 coverage, an expensive and brittle arrangement.
Engineers didn’t have access to query performance metrics, which meant they were often flying blind during development and testing. Even small changes could feel risky. All that friction added up. As our engineering teams moved faster, the database dragged behind. We needed something with more scale and visibility, something a lot less hands-on.

It’s called Cloud SQL, look it up

When we started looking for alternatives, we weren’t trying to modernize our database: We were trying to modernize how our teams worked with data. We wanted to reduce operational load, yes, but also to give our engineers more control and fewer blockers when building new services.

Cloud SQL checked all the boxes. It gave us the benefits of a managed service (automated backups, simplified disaster recovery, no more patching) while preserving compatibility with the MySQL stack our systems already relied on. But the real win was the developer experience. With built-in query insights and tight integration with Google Cloud, Cloud SQL made it easier for engineers to own what they built. It fit perfectly with where we were headed: faster cycles; infrastructure as code; and a platform that let us scale up and out, team by team.

Measure twice and cut over once

We knew the migration had to be smooth because our platform didn’t exactly have quiet hours. (Fashion never sleeps, apparently.) So we approached it as a full-scale engineering project, with phases for everything from testing to dry runs. While we planned to use Google’s Database Migration Service across the board, one database required a manual workaround. That turned out to be a good thing, though: It pushed us to document and validate every step.
We spent weeks running Cloud SQL in lower environments, stress-testing setups, refining our rollout plan, and simulating rollback scenarios. When it came time to cut over, the entire process fit within a tight three-hour window. One issue did come up (a configuration flag that affected performance), but Google’s support team jumped in fast, and we were able to fix it on the spot. Overall, minimal downtime and no surprises. Our kind of boring.

Fewer queues and more runway

One of the biggest changes we’ve noticed post-migration is how our teams work. With our old setup, making a schema change meant routing through DBAs, coordinating with external support, then holding your breath during rollout. Now, engineers can breathe a little easier and own those changes end to end. Cloud SQL gives our teams access to IAM-controlled environments where they can safely test and deploy. That has let us move toward real CI/CD for database changes, with no bottlenecks or surprises. With better visibility and guardrails, our teams are shipping higher-quality code and catching issues earlier in the lifecycle. Meanwhile, our DBAs can focus on strategic initiatives, such as automation and platform-wide improvements, rather than being stuck in a ticket queue.

Our most cost-effective look yet

Within weeks of moving to Cloud SQL, we were able to offboard our third-party MySQL support vendor, cutting over $180,000 in annual costs. Even better, our internal DBA team got time back to work on higher-value initiatives instead of handling every performance issue and schema request.

Cloud SQL also gave us a clearer picture of how our systems were running. Engineers started identifying slow queries and fixing them proactively, something that used to be reactive and time-consuming. With tuning and observability included, we optimized instance sizing and reduced infrastructure spend, all without compromising performance.
And with regional DR configurations now baked in, we’ve simplified our disaster recovery setup while improving resilience.

In: Cloud SQL

We’re building toward a platform where engineering teams can move fast without trading off safety or quality. That means more automation, more ownership, and fewer handoffs. With Cloud SQL, we’re aiming for a world where schema updates are rolled out as seamlessly as application code. This shift is technical, but it’s also cultural. And it’s a big part of how we’ll continue to scale the business and support our expansion into AI. The foundation is there. Now, we’re just dressing it up.

Learn more:

- Discover how Cloud SQL can transform your business! Start a free trial today!
- Download this IDC report to learn how migrating to Cloud SQL can lower costs, boost agility, and speed up deployments.
- Learn how Ford and Yahoo gained high performance and cut costs by modernizing with Cloud SQL.

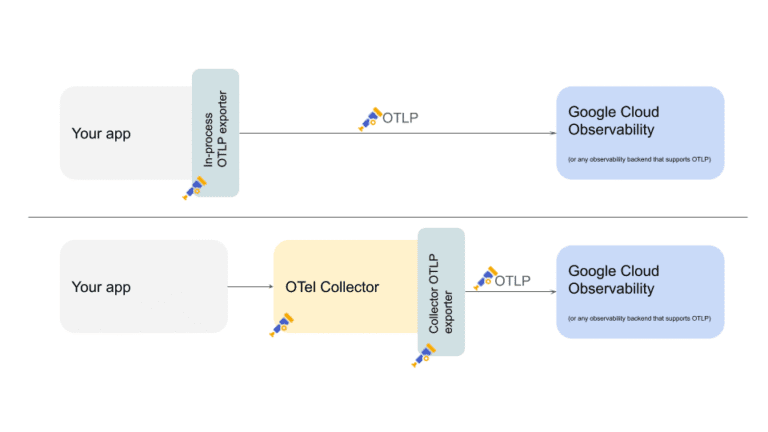

OpenTelemetry Protocol (OTLP) is a data exchange protocol designed to transport telemetry from a source to a destination in a vendor-agnostic fashion. Today, we’re pleased to announce that Cloud Trace, part of Google Cloud Observability, now supports users sending trace data using OTLP via telemetry.googleapis.com.

Fig 1: Both in-process and collector-based configurations can use native OTLP exporters to transmit telemetry data

Using OTLP to send telemetry data to observability tooling provides these benefits:

- Vendor-agnostic telemetry pipelines: Use native OTLP exporters from in-process or collector-based configurations. This eliminates the need to use vendor-specific exporters in your telemetry pipelines.
- Strong telemetry data integrity: Ensure your telemetry data preserves the OTel data model during transmission and storage, and avoid transformations into proprietary formats.
- Interoperability with your choice of observability tooling: Easily send telemetry to one or more observability backends that support native OTLP without any additional OTel exporters.
- Reduced client-side complexity and resource usage: Move your telemetry processing logic, such as applying filters, to the observability backend, reducing the need for custom rules and thus client-side processing overhead.

Let’s take a quick look at how to use OTLP from Cloud Trace.

Cloud Trace and OTLP in action

Sending trace data using OTLP via telemetry.googleapis.com is now the recommended best practice for both new and existing users, especially for those who expect to send high volumes of trace data.

Fig 2: Trace explorer page in Cloud Trace highlighting fields that leverage OpenTelemetry semantic conventions

The Trace explorer page makes extensive use of OpenTelemetry conventions to offer a rich user experience when filtering and finding traces of interest. For example, the OpenTelemetry convention service.name is used to indicate which service a span originates from.
The status of the span is indicated by OpenTelemetry’s span status.

Cloud Trace’s internal storage system now uses the OpenTelemetry data model natively for organizing and storing your trace data. The new storage system enables much higher limits when trace data is sent through telemetry.googleapis.com. Key changes include:

- Attribute sizes: Attribute keys can now be up to 512 bytes (from 128 bytes), and values up to 64 KiB (from 256 bytes).
- Span details: Span names can be up to 1024 bytes (from 128 bytes), and spans can have up to 1024 attributes (from 32).
- Event and link counts: Events per span increase to 256 (from 128), and links per span are now 128.

We believe sending your trace data using OTLP will result in a better user experience in the trace explorer UI and Observability Analytics, along with the above storage limit increases.

Google Cloud’s vision for OTLP

Providing OTLP support for Cloud Trace is just the beginning. Our vision is to leverage OpenTelemetry to generate, collect, and access telemetry across Google Cloud. Our commitment to OpenTelemetry extends across all telemetry types (traces, metrics, and logs) and is a cornerstone of our strategy to simplify telemetry management and foster an open cloud environment.

We understand that in today's complex cloud environments, managing telemetry data across disparate systems, inconsistent data formats, and vast volumes of information can lead to observability gaps and increased operational overhead. We are dedicated to streamlining your telemetry pipeline, starting with focusing on native OTLP ingestion for all telemetry types so you can seamlessly send your data to Google Cloud Observability. This will help foster true vendor neutrality and interoperability, eliminating the need for complex conversions or vendor-specific agents.
Beyond seamless ingestion, we're also building capabilities for managed server-side processing, flexible routing to various destinations, and unified management and control over your telemetry across environments. This will further our observability experience with advanced processing and routing capabilities all in one place.

The introduction of OTLP trace ingestion with telemetry.googleapis.com is a significant first step in this journey. We're continually working to expand our OpenTelemetry support across all telemetry types with additional processing and routing capabilities to provide you with a unified and streamlined observability experience on Google Cloud.

Get started today

We encourage you to begin using telemetry.googleapis.com for your trace data by following this migration guide. This new endpoint offers enhanced capabilities, including higher storage limits and an improved user experience within Cloud Trace Explorer and Observability Analytics.
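To make the new storage limits concrete, here is a minimal sketch of a client-side pre-flight check against the limits quoted in this announcement. The span-as-dict shape, the `check_span_limits` helper, and the choice to measure sizes as UTF-8 byte lengths are all assumptions for illustration; this is not part of any Cloud Trace or OpenTelemetry API.

```python
# Limits as quoted in the announcement for data sent via telemetry.googleapis.com.
MAX_ATTR_KEY_BYTES = 512
MAX_ATTR_VALUE_BYTES = 64 * 1024  # 64 KiB
MAX_SPAN_NAME_BYTES = 1024
MAX_ATTRS_PER_SPAN = 1024
MAX_EVENTS_PER_SPAN = 256
MAX_LINKS_PER_SPAN = 128


def _size(value) -> int:
    """UTF-8 byte length of the value's string form (assumed size measure)."""
    return len(str(value).encode("utf-8"))


def check_span_limits(span: dict) -> list:
    """Return human-readable violations for a hypothetical span-like dict."""
    problems = []
    if _size(span.get("name", "")) > MAX_SPAN_NAME_BYTES:
        problems.append("span name exceeds 1024 bytes")

    attrs = span.get("attributes", {})
    if len(attrs) > MAX_ATTRS_PER_SPAN:
        problems.append("more than 1024 attributes")
    for key, value in attrs.items():
        if _size(key) > MAX_ATTR_KEY_BYTES:
            problems.append(f"attribute key too long: {key[:20]}...")
        if _size(value) > MAX_ATTR_VALUE_BYTES:
            problems.append(f"attribute value too long for key: {key[:20]}")

    if len(span.get("events", [])) > MAX_EVENTS_PER_SPAN:
        problems.append("more than 256 events")
    if len(span.get("links", [])) > MAX_LINKS_PER_SPAN:
        problems.append("more than 128 links")
    return problems


# Usage: a span that carries the service.name convention and fits every limit.
span = {
    "name": "GET /cart",
    "attributes": {"service.name": "checkout"},
    "events": [],
    "links": [],
}
print(check_span_limits(span))
```

A check like this is only a convenience for catching oversized data before export; the backend remains the authority on what it accepts.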

Images. Text. Audio. There’s no modality that is not handled by AI. And AI systems reach even further, planning advertising and marketing campaigns, automating social media postings, … Most of this was unthinkable a mere ten years ago. But then, the first machine learning-driven algorithms took their first steps: out of the research labs, into […] The post If we use AI to do our work – what is our job, then? appeared first on Towards Data Science.

Apple’s new smartwatches have the spotlight this week, but Android users have a reason to celebrate, too. That’s because Samsung’s gorgeous, Bluetooth-enabled Galaxy Watch 8 Classic is cheaper than ever at Woot through September 19th. It’s down to $359.99 ($140 off), nearly matching the price of the $349.99 Galaxy Watch 8. Not a bad discount […]