Archives AI News

Cronos: The New Dawn Launch Trailer

🚀 How Startups and Universities Spark Innovation

Target Promo Codes and Deals: Up to 50% Off

Enjoy $50 off your next order, 20% off sitewide, and up to 50% off with Target coupon codes and deals available this month.

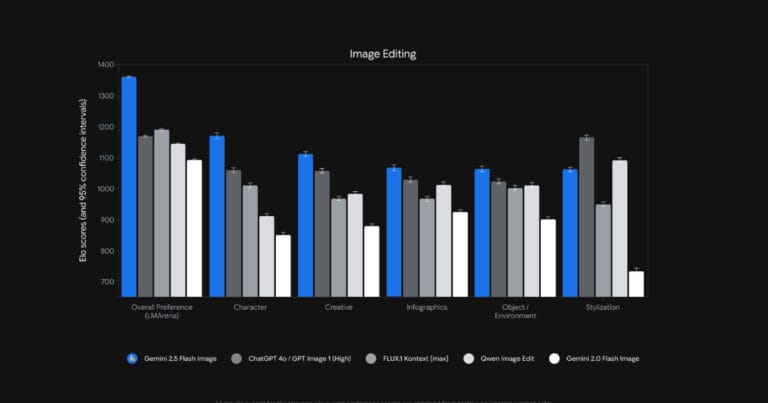

Google Launches Gemini 2.5 Flash Image with Advanced Editing and Consistency Features

Google released Gemini 2.5 Flash Image (nicknamed nano-banana), its newest image generation and editing model. The system introduces several upgrades over earlier Flash models, including character consistency across prompts, multi-image fusion, precise prompt-based editing, and integration of world knowledge for semantic understanding. By Robert Krzaczyński

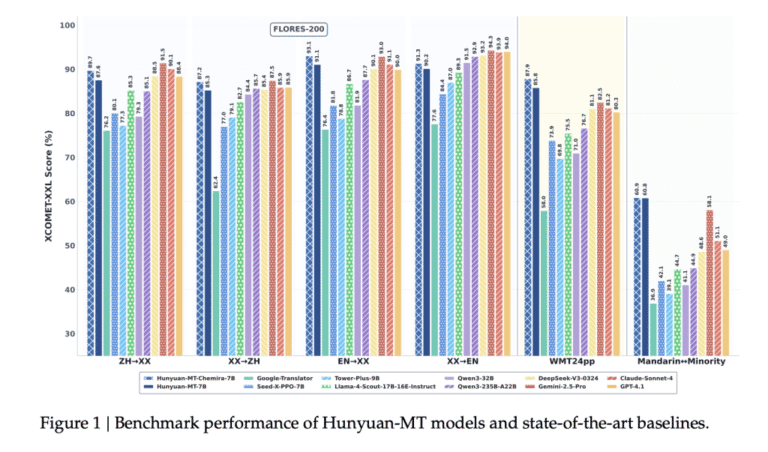

Tencent Hunyuan Open-Sources Hunyuan-MT-7B and Hunyuan-MT-Chimera-7B: A State-of-the-Art Multilingual Translation Models

Introduction Tencent’s Hunyuan team has released Hunyuan-MT-7B (a translation model) and Hunyuan-MT-Chimera-7B (an ensemble model). Both models are designed specifically for multilingual machine translation and were introduced in conjunction with Tencent’s participation in the WMT2025 General Machine Translation shared task, where Hunyuan-MT-7B ranked first in 30 out of 31 language pairs. Model Overview Hunyuan-MT-7B Hunyuan-MT-Chimera-7B […] The post Tencent Hunyuan Open-Sources Hunyuan-MT-7B and Hunyuan-MT-Chimera-7B: A State-of-the-Art Multilingual Translation Models appeared first on MarkTechPost.

A Deep Dive into RabbitMQ & Python’s Celery: How to Optimise Your Queues

Key lessons I’ve learned running RabbitMQ + Celery in production The post A Deep Dive into RabbitMQ & Python’s Celery: How to Optimise Your Queues appeared first on Towards Data Science.

Yet Unnoticed in LSTM: Binary Tree Based Input Reordering, Weight Regularization, and Gate Nonlinearization

arXiv:2509.00087v1 Announce Type: new Abstract: LSTM models used in current Machine Learning literature and applications, has a promising solution for permitting long term information using gating mechanisms that forget and reduce effect of current input information. However, even with this pipeline, they do not optimally focus on specific old index or long-term information. This paper elaborates upon input reordering approaches to prioritize certain input indices. Moreover, no LSTM based approach is found in the literature that examines weight normalization while choosing the right weight and exponent of Lp norms through main supervised loss function. In this paper, we find out which norm best finds relationship between weights to either smooth or sparsify them. Lastly, gates, as weighted representations of inputs and states, which control reduction-extent of current input versus previous inputs (~ state), are not nonlinearized enough (through a small FFNN). As analogous to attention mechanisms, gates easily filter current information to bold (emphasize on) past inputs. Nonlinearized gates can more easily tune up to peculiar nonlinearities of specific input in the past. This type of nonlinearization is not proposed in the literature, to the best of author's knowledge. The proposed approaches are implemented and compared with a simple LSTM to understand their performance in text classification tasks. The results show they improve accuracy of LSTM.

Practical and Private Hybrid ML Inference with Fully Homomorphic Encryption

arXiv:2509.01253v1 Announce Type: cross Abstract: In contemporary cloud-based services, protecting users' sensitive data and ensuring the confidentiality of the server's model are critical. Fully homomorphic encryption (FHE) enables inference directly on encrypted inputs, but its practicality is hindered by expensive bootstrapping and inefficient approximations of non-linear activations. We introduce Safhire, a hybrid inference framework that executes linear layers under encryption on the server while offloading non-linearities to the client in plaintext. This design eliminates bootstrapping, supports exact activations, and significantly reduces computation. To safeguard model confidentiality despite client access to intermediate outputs, Safhire applies randomized shuffling, which obfuscates intermediate values and makes it practically impossible to reconstruct the model. To further reduce latency, Safhire incorporates advanced optimizations such as fast ciphertext packing and partial extraction. Evaluations on multiple standard models and datasets show that Safhire achieves 1.5X - 10.5X lower inference latency than Orion, a state-of-the-art baseline, with manageable communication overhead and comparable accuracy, thereby establishing the practicality of hybrid FHE inference.

Learning from Peers: Collaborative Ensemble Adversarial Training

arXiv:2509.00089v1 Announce Type: new Abstract: Ensemble Adversarial Training (EAT) attempts to enhance the robustness of models against adversarial attacks by leveraging multiple models. However, current EAT strategies tend to train the sub-models independently, ignoring the cooperative benefits between sub-models. Through detailed inspections of the process of EAT, we find that that samples with classification disparities between sub-models are close to the decision boundary of ensemble, exerting greater influence on the robustness of ensemble. To this end, we propose a novel yet efficient Collaborative Ensemble Adversarial Training (CEAT), to highlight the cooperative learning among sub-models in the ensemble. To be specific, samples with larger predictive disparities between the sub-models will receive greater attention during the adversarial training of the other sub-models. CEAT leverages the probability disparities to adaptively assign weights to different samples, by incorporating a calibrating distance regularization. Extensive experiments on widely-adopted datasets show that our proposed method achieves the state-of-the-art performance over competitive EAT methods. It is noteworthy that CEAT is model-agnostic, which can be seamlessly adapted into various ensemble methods with flexible applicability.