Warner Bros. sues Midjourney for AI images of Superman, Batman, and other characters

Warner Bros. is suing Midjourney for AI images of Superman, Batman, and other characters.

The just-fully-announced Legion Go 2 will be the first handheld outside of Asus that’s confirmed to get the new Xbox full-screen experience (FSE). Lenovo spokesperson Jeff Witt tells me buyers will be able to manually switch the handheld to Xbox FSE after it’s ready in the spring 2026 time frame, months after the Legion Go […]

For ten years, Google Kubernetes Engine (GKE) has been at the forefront of innovation, powering everything from microservices to cloud-native AI and edge computing. To honor this special birthday, we’re challenging you to catapult your microservices into the future with cutting-edge agentic AI. Are you ready to celebrate? Build AI Agents on GKE in the GKE Turns 10 Hackathon Why join? Hands-on learning with GKE: This is your shot to build the next evolution of applications by integrating agentic AI capabilities on GKE. We have everything you need to get started: our microservice applications, example agents on GitHub, documentation, quickstarts, tutorials, and a webinar hosted by our experts. Showcase your skills: You’ll have the opportunity to elevate a sample microservices application into a unique use case. Feel free to get creative with non-traditional use cases and utilize Agent Development Kit (ADK), Model Context Protocol (MCP), and the Agent2Agent (A2A) protocol for extra powerful functionality! Think you have what it takes to win? 
Build an app to showcase your agents and you could potentially win: Overall grand prize: $15,000 in USD, $3,000 in Google Cloud Credits for use with a Cloud Billing Account, a chance to win a maximum of two (2) KubeCon North America conference passes in Atlanta, Georgia (November 10-13, 2025), a 1-year, no-cost Google Developer Program Premium subscription, guest interview on the Kubernetes Podcast, video feature with the GKE team, virtual coffee with a Google team member, and social promo. Regional winners: $8,000 in USD, $1,000 in Google Cloud Credits for use with a Cloud Billing Account, video feature with the GKE team on a Google Cloud social media channel, virtual coffee with a Google team member, and social promo. Honorable mentions: $1,000 in USD and $500 in Google Cloud Credits for use with a Cloud Billing Account. Unleash the power of agentic AI on GKE GKE is built on open-source Kubernetes, but is also tightly integrated with the Google Cloud ecosystem. This makes it easy to get started with a simple application, while having the control you need for more complex application orchestration and management. When you join the GKE Turns 10 Hackathon, your mission is to take pre-existing microservice applications (either Bank of Anthos or Online Boutique) and then integrate cutting-edge agentic AI capabilities. The goal is not to modify the core application code directly, but instead to build new components that interact with its established APIs! Here is some inspiration: Optimize important processes: Add a sophisticated AI chatbot to the Online Boutique that can query inventory, provide personalized product recommendations, or even check a user’s financial balance via an integrated Bank of Anthos API. Streamline maintenance and mitigation: Develop an agent that intelligently monitors microservice performance on GKE, suggests troubleshooting steps, and even automates remediation.
Crucial note: Your project must be built using GKE and Google AI models such as Gemini, focusing on how the agents interact with your chosen microservice application. As long as GKE is the foundation, feel free to enhance your project by integrating other Google Cloud technologies! Ready to start building? Head over to our hackathon website and watch our webinar to learn more, review the rules, and register.

We’re excited to announce an expansion to our Compute Flexible Committed Use Discounts (Flex CUDs), providing you with greater flexibility across your cloud environment. Your spend commitments now stretch further and cover a wider array of Google Cloud services and VM families, translating into greater savings for your workloads. Flex CUDs are spend-based commitments that provide deep discounts on Google Cloud compute resources in exchange for a one- or three-year term. This model offers maximum flexibility, automatically applying savings across a broad pool of eligible VM families and regions without being tied to a single resource. More power, more savings with expanded coverage We understand that modern applications are built on a diverse mix of services, from massive databases to nimble serverless functions. To better support the way you build, we’re expanding Flex CUDs to cover more of the specialized and serverless solutions you use every day: Memory-optimized VM families: We’re bringing enhanced discounts to our memory-optimized M1, M2, M3 and the new M4 VM families. Now you can get more value from critical workloads like SAP HANA, in-memory analytics platforms and high-performing databases. High-performance computing (HPC) VM families: For compute-intensive workloads, Flex CUDs now apply to our HPC-optimized H3 and the new H4D VM families, perfect for complex simulations and scientific research. Cloud Run and Cloud Functions: For developers and organizations that use Cloud Run's fully managed platform, we are extending Flex CUDs’ coverage to Cloud Run request-based billing and Cloud Run functions.
Why this matters This expansion of Compute Flex CUDs is designed with your growth and efficiency in mind: Maximize your spend commitments: Instead of being tied to a specific resource type or region, your committed spend can now be applied across a larger portion of your Google Cloud usage. This means less "wasted" commitment and more active savings. Enhanced financial predictability and control: With greater coverage, you gain a clearer picture of your anticipated cloud spend, making budgeting and financial planning more predictable. Simplified cost management: A single, flexible commitment can now cover a more diverse set of services, streamlining your financial operations and reducing the complexity of managing multiple, granular commitments. Fuel innovation: By reducing the cost of core compute and serverless services, you free up budget that can be reinvested into innovation. An updated Billing model Compute Flex CUDs’ expanded coverage is made possible by the new and improved spend-based CUDs model, which streamlines how discounts are applied and provides greater flexibility. Enabling this feature triggers some experience changes to the Billing user interface, Cloud Billing export to BigQuery schema, and Cloud Commerce Consumer Procurement API. This new billing model is simpler: we directly charge the discounted rate for CUD-eligible usage, reflecting the applicable discount, instead of using credits to offset usage and reflect savings. It’s also more flexible: we apply discounts to a wider range of products within spend-based CUDs. For more, this follow-up resource details the updates, including information on a sample export to preview your monthly bill in the new format, key CUD KPIs, new SKUs added to CUDs, and CUD product information.
You can learn more about these changes in the documentation. Availability and next steps At Google Cloud, we’re committed to providing you with the most flexible and cost-effective solutions for your evolving cloud needs. This expansion of Compute Flex CUDs is a testament to that commitment, enabling you to build, deploy, and scale your applications with even greater financial efficiency. Starting today, you can opt in and begin enjoying Compute Flex CUDs’ expanded scope and improved billing model. Starting January 21, 2026, all customers will be automatically transitioned to the new spend-based model to take advantage of these expanded Flex CUDs. If you don’t opt in to multi-price CUDs, these changes will be automatically applied on January 21, 2026. New customers who create a Billing Account on or after July 15, 2025 will automatically be under the new billing model for Flex CUDs. Stay tuned for more updates as we continue to enhance our offerings to support your success on Google Cloud.
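The difference between the two billing presentations described above (a credit that offsets list-price usage versus a directly charged discounted rate) can be illustrated with a small sketch. The usage amount and the 20% discount below are hypothetical placeholders, not published Flex CUD rates, and the sketch assumes the same discount percentage applies under both presentations; it ignores commitment amounts and any usage beyond the commitment.

```python
from decimal import Decimal


def credit_model_cost(usage_at_list_price: Decimal, discount_rate: Decimal) -> Decimal:
    """Credit-style presentation: bill list price, then subtract a credit line."""
    credit = usage_at_list_price * discount_rate
    return usage_at_list_price - credit


def discounted_rate_cost(usage_at_list_price: Decimal, discount_rate: Decimal) -> Decimal:
    """Direct presentation: bill the eligible usage at the discounted rate."""
    discounted_multiplier = Decimal("1") - discount_rate
    return usage_at_list_price * discounted_multiplier


# Hypothetical figures for illustration only.
usage = Decimal("1000.00")  # CUD-eligible usage at list price
rate = Decimal("0.20")      # assumed discount, not an actual Flex CUD rate

print("credit model:", credit_model_cost(usage, rate))
print("discounted rate:", discounted_rate_cost(usage, rate))
```

Under these assumptions both functions return the same net amount; what changes is how the bill shows it, as one discounted charge rather than a charge plus an offsetting credit.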

Apache Spark is a fundamental part of most modern lakehouse architectures, and Google Cloud's Dataproc provides a powerful, fully managed platform for running Spark applications. However, for data engineers and scientists, debugging failures and performance bottlenecks in distributed systems remains a universal challenge. Manually troubleshooting a Spark job requires piecing together clues from disparate sources — driver and executor logs, Spark UI metrics, configuration files and infrastructure monitoring dashboards. What if you had an expert assistant to perform this complex analysis for you in minutes? Today, we are excited to introduce the public preview of Gemini Cloud Assist Investigations for troubleshooting Spark workloads. Available for both Dataproc on Google Compute Engine and Google Cloud Serverless for Apache Spark, Gemini Cloud Assist identifies underlying issues and provides clear, actionable recommendations. Accessible directly in the Google Cloud console — either from the resource page (e.g., Serverless for Apache Spark Batch job list or Batch detail page) you are investigating or from the central Cloud Assist Investigations list — Gemini Cloud Assist offers several powerful capabilities: For data engineers: Fix complex job failures faster. A prioritized list of intelligent summaries and cross-product root cause analyses helps in quickly narrowing down and resolving a problem. For data scientists and ML engineers: Solve performance and environment issues without deep Spark knowledge. Gemini acts as your on-demand infrastructure and Spark expert so you can focus more on models. For Site Reliability Engineers (SREs): Quickly determine if a failure is due to code or infrastructure. Gemini finds the root cause by correlating metrics and logs across different Google Cloud services, thereby reducing the time required to identify the problem. For big data architects and technical managers: Boost team efficiency and platform reliability. 
Gemini helps new team members contribute faster, describe issues in natural language and easily create support cases. Gemini Cloud Assist is also accessible through a direct API and other interfaces. The inherent challenges of debugging Spark jobs Debugging Spark applications is inherently complex because failures can stem from anywhere in a highly distributed system. These issues generally fall into two categories. First are the outright job failures. Then, there are the more insidious, subtle performance bottlenecks. Additionally, cloud infrastructure issues can cause workload failures, complicating investigations. Gemini Cloud Assist is designed to tackle all these challenges head-on:

| Problem Area | Common Issues | How Gemini Cloud Assist can help |
| --- | --- | --- |
| Infrastructure Problems | Permission issues, networking errors, resource exhaustion | Gemini Cloud Assist analyzes and correlates a wide range of data, including metrics, configurations, and logs, across Google Cloud services and pinpoints the root cause of infrastructure issues and provides a clear resolution. |
| Configuration Problems | Resource under-provisioning, configuration missteps | Gemini Cloud Assist automatically identifies incorrect or insufficient Spark and cluster configurations, and recommends the right settings for your workload. |
| Application Problems | Application logic related problems, inefficient code and algorithms | Gemini Cloud Assist analyzes application logs, Spark metrics, and performance data and diagnoses code errors and performance bottlenecks, and provides actionable recommendations to fix them. |
| Data Problems | Stage/Task failures, data-related issues | Gemini Cloud Assist analyzes Spark metrics and logs and identifies data-related issues like data skew, and provides actionable recommendations to improve performance and stability. |

Gemini Cloud Assist: Your AI-powered operational expert Let's explore how Gemini transforms the investigation process in common, real-world scenarios.
Example 1: The slow job with performance bottlenecks Some of the most challenging issues are not outright failures but performance bottlenecks. A job that runs slowly can impact service-level objectives (SLOs) and increase costs, but without error logs, diagnosing the cause requires deep Spark expertise. Say a critical batch job succeeds but takes much longer than expected. There are no failure messages, just poor performance. Manual investigation requires a deep-dive analysis in the Spark UI. You would need to manually search for "straggler" tasks that are slowing down the job. The process also involves analyzing multiple task-level metrics to find signs of memory pressure or data skew. With Gemini assistance By clicking Investigate, Gemini automatically performs this complex analysis of performance metrics, presenting a summary of the bottleneck. Gemini acts as an on-demand performance expert, augmenting a developer's workflow and empowering them to tune workloads without needing to be a Spark internals specialist. Example 2: The silent infrastructure failure Sometimes, a Spark job or cluster fails due to issues in the underlying cloud infrastructure or integrated services. These problems are difficult to debug because the root cause is often not in the application logs but in a single, obscure log line from the underlying platform. Say a cluster configured to use GPUs fails unexpectedly. The manual investigation begins by checking the cluster logs for application errors. If no errors are found, the next step is to investigate other Google Cloud services. This involves searching Cloud Audit Logs and monitoring dashboards for platform issues, like exceeded resource quotas. With Gemini assistance A single click on the Investigate button triggers a cross-product analysis that looks beyond the cluster's logs. Gemini quickly pinpoints the true root cause, such as an exhausted resource quota, and provides mitigation steps. 
Gemini bridges the gap between the application and the platform, saving hours of broad, multi-service investigation. Get started today! Spend less time debugging and more time building and innovating. Let Gemini Cloud Assist in Dataproc on Compute Engine and Google Cloud Serverless for Apache Spark be your expert assistant for big data operations. Get Gemini Cloud Assist today! Learn more about Gemini Cloud Assist in Dataproc on Compute Engine and Google Cloud Serverless for Apache Spark.
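To make the manual "straggler" search from Example 1 concrete, here is a minimal sketch of one common heuristic: flag tasks whose duration far exceeds the median for their stage. This is an illustration of the manual analysis only, not a description of how Gemini Cloud Assist works; the function name, the 3x threshold, and the sample durations are all hypothetical.

```python
from statistics import median


def find_stragglers(task_durations_s: dict[str, float], factor: float = 3.0) -> list[str]:
    """Return ids of tasks that ran longer than `factor` times the median duration."""
    if not task_durations_s:
        return []
    typical = median(task_durations_s.values())
    return sorted(
        task_id
        for task_id, duration in task_durations_s.items()
        if duration > factor * typical
    )


# Hypothetical per-task durations (seconds) for a single stage.
durations = {"task-0": 12.0, "task-1": 11.5, "task-2": 13.2, "task-3": 95.0}

print(find_stragglers(durations))
```

A task that stands out this way often points to data skew (one partition holding far more rows than the others), which is why the manual process also looks at task-level input sizes and memory metrics.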

Learn how to get AI insights in one easy step in Google Sheets.

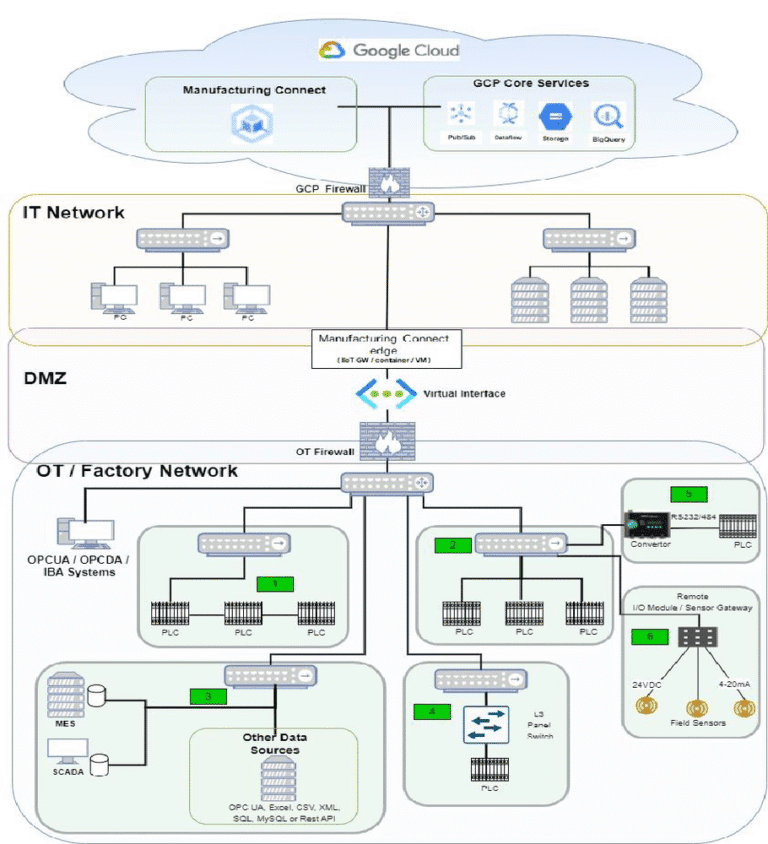

Tata Steel is one of the world’s largest steel producers, with an annual crude steel capacity exceeding 35 million tons. With such a large and global output, we needed a way to improve asset availability, product quality, operational safety, and environmental monitoring. By centralizing data from diverse sources and implementing advanced analytics with Google Cloud, we're driving a more proactive and comprehensive approach to worker safety and environmental stewardship. To achieve these objectives, we designed and implemented a robust multi-cloud architecture. This setup unifies manufacturing data across various platforms, establishing the Tata Steel Data Lake on Google Cloud as the centralized repository for seamless data aggregation and analytics. High level IIOT data integration architecture Building a unified data foundation on Google Cloud Our comprehensive data acquisition framework spans multiple plant locations, including Jamshedpur, in the eastern Indian state of Jharkhand, where we leverage Litmus and ClearBlade, both available on Google Cloud Marketplace, to collect real-time telemetry data from programmable logic controllers (PLCs) via LAN, SIM cards, and process networks. As alternatives, we employ an internal data staging setup using SAP BusinessObjects Data Services (BODS) and Web APIs. We have also developed in-house smart sensors that use LoRaWAN and Web APIs to upstage data. These diverse approaches ensure seamless integration of both Operational Technology (OT) data from PLCs and Information Technology (IT) data from SAP into Google Cloud BigQuery, enabling unified and efficient data consumption. Initially, Google Cloud IoT Core was used for ingesting crane data. Following its deprecation, we redesigned the data pipeline to integrate ClearBlade IoT Services, ensuring seamless and secure data ingestion into Google Cloud.
Our OT Data Lake is architected on Manufacturing Data Engine (MDE) and BigQuery, which provides decoupled storage and compute capabilities for scalable, cost-efficient data processing. We developed a visualization layer with hourly and daily table partitioning to support both real-time insights and long-term trend analysis, strategically archiving older datasets in Google Cloud Storage for cost optimization. We also implemented a secure, multi-path data ingestion architecture to upstage OT data with minimal latency, utilizing Litmus and ClearBlade IoT Core. Finally, we developed custom solutions to extract OPC Data Access and OPC Unified Architecture data from remote OPC servers, staging it through on-premises databases before secure transfer to Google Cloud. Together, this comprehensive architecture provides immediate access to real-time device data while facilitating batch processing of information from SAP and other on-premises databases. This integrated approach to OT and IT data delivers a holistic view of operations, enabling more informed decision-making for critical initiatives like Asset Health Monitoring, Environment Canvas, and the Central Quality Management System, across all Tata Steel locations. Crane health monitoring with IoT data Monitoring health parameters of crane sub devices Overcoming legacy challenges for real-time operations Before deploying Industrial IoT with Google Cloud, high-velocity data was not readily accessible in our central storage. Instead, the data resided in local systems, such as mediation servers and IBA, where limited storage capacity led to automatic purging after a defined retention period. This approach, combined with legacy infrastructure, significantly constrained data availability and hindered informed business decision-making. Furthermore, edge analytics and visualization capabilities were limited, and data latency remained high due to processing bottlenecks at the mediation layer.
Addressing these issues, particularly implementing a secure OT data pipeline within a DMZ environment, posed significant challenges. To mitigate cybersecurity risks and maintain data integrity, we designed multiple architectural data paths that incorporate one-way data transfer mechanisms (data diodes) to ensure the secure and controlled upstaging of OT data to the cloud. Our Google Cloud implementation has since enabled the seamless acquisition of high-volume and high-velocity data for analyzing manufacturing assets and processes, all while ensuring compliance with security protocols across both the IT and OT layers. This initiative has enhanced operational efficiency and delivered cost savings. Our collaboration with Google Cloud to evaluate and implement secure, more resilient manufacturing operations solutions marks a key milestone in Tata Steel’s digital transformation journey. The new unified data foundation empowered data-driven decision-making through AI-enabled capabilities, including: Asset health monitoring Event-based alerting mechanisms Real-time data monitoring Advanced data analytics for enhanced user experience The iMEC: Powering predictive maintenance and efficiency Tata Steel's Integrated Maintenance Excellence Centre (iMEC) utilizes MDE to build and deploy monitoring solutions. This involves leveraging data analytics, predictive maintenance strategies, and real-time monitoring to enhance equipment reliability and enable proactive asset management. MDE, which provides a zero code pre-configured set of Google Cloud infrastructure, acts as a central hub for ingesting, processing, and analyzing data from various sensors and systems across the steel plant, enabling the development and implementation of solutions for improved operational efficiency and reduced downtime. 
With monitoring solutions helping to deliver real-time advice, maintenance teams can reduce the physical human footprint at hazardous shop floor locations while providing more ergonomic and comfortable working environments to employees compared to near-location control rooms. These solutions also help us centralize asset management and maintenance expertise, employing real-time data to enable significant operational improvements and cost-effectiveness goals, including: Reducing unplanned outages and increasing equipment availability. Transitioning from Time-Based Maintenance (TBM) to predictive maintenance. Optimizing resource use, reducing power costs, and minimizing delays. Driving safety with video analytics and cloud storage To strengthen worker safety, we have also deployed a safety violation monitoring system powered by on-premise, in-house video analytics. Detected violation images are automatically uploaded to a Cloud Storage bucket for further analysis and reporting. We developed and trained a video analytics model in-house, using specific samples of violations and non-violations tailored to each use case. This innovative approach has enabled us to efficiently store a growing catalog of safety violation images on Cloud Storage, harnessing its elastic storage capabilities. Our Central Quality Management System — which ensures our data is complete, accurate, consistent, and reliable — is also built on Google Cloud, utilizing BigQuery for scalable data storage and analysis, and Looker Studio for intuitive data visualization and reporting. Google Cloud for environmental monitoring Tata Steel’s commitment to sustainability is evident in our comprehensive environment monitoring system, which operates entirely on the Google Cloud. Our Environment Canvas system captures a wide array of environmental Key Performance Indicators (KPIs), including stack emission and fugitive emission. 
Environment Canvas – Data office & visualization architecture Environmental parameters We capture the data for these KPIs through sensors, SAP, and manual entries. While some sensor data from certain plants is initially sent to a different cloud or on-premises systems, we eventually transfer it to Google Cloud for unified consumption and visualization. By leveraging the power of Google Cloud’s data and AI technologies, we are advancing operational monitoring and safety through a unified data foundation, real-time monitoring, and predictive maintenance — all enabled by iMEC. At the same time, we are reinforcing our commitment to environmental responsibility with a Google Cloud-based system that enables comprehensive monitoring and real-time reporting of environmental KPIs, delivering actionable insights for responsible operations. Learn more about how BigQuery and the Manufacturing Data Engine can help your organization achieve your business goals.
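The hourly and daily partitioning with archival of older data mentioned earlier can be sketched in a few lines. This is a generic illustration, not Tata Steel's implementation: the key formats, the function names, and the 90-day retention window are hypothetical assumptions.

```python
from datetime import datetime, timedelta, timezone


def partition_keys(ts: datetime) -> tuple[str, str]:
    """Return (hourly_key, daily_key) for a timezone-aware telemetry timestamp, in UTC."""
    ts_utc = ts.astimezone(timezone.utc)
    return ts_utc.strftime("%Y%m%d%H"), ts_utc.strftime("%Y%m%d")


def should_archive(ts: datetime, now: datetime, retention_days: int = 90) -> bool:
    """True when a reading is older than the (assumed) hot-storage retention window."""
    return now - ts > timedelta(days=retention_days)


# Hypothetical sensor reading timestamp.
reading_time = datetime(2025, 1, 15, 13, 45, tzinfo=timezone.utc)
hourly_key, daily_key = partition_keys(reading_time)

print(hourly_key, daily_key)
print(should_archive(reading_time, now=datetime(2025, 6, 1, tzinfo=timezone.utc)))
```

Hourly keys keep recent queries narrow for real-time dashboards, daily keys suit long-term trend analysis, and the retention check marks which data could move to cheaper object storage.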

Building an app is exciting - but sharing it is where the real value kicks in. Back when Heroku offered a free tier, deploying demos was effortless. Those days are gone, and finding a simple, free way to showcase machine learning apps has become harder. That’s where Hugging Face Spaces comes in. In this post, I’ll show you how to take a small Streamlit app that visualizes stock financials and deploy it step-by-step to Hugging Face Spaces, making it live and shareable with just a few clicks. The post Showcasing Your Work on HuggingFace Spaces appeared first on Towards Data Science.

If you want to stay alert while running or biking, the Nothing Ear Open are a worthwhile alternative to classic in-ear cans. That’s because the company’s first pair of open-style earbuds — which are now on sale at Nothing’s online storefront or Amazon for an all-time low $99 ($50 off) with a Prime membership — let […]