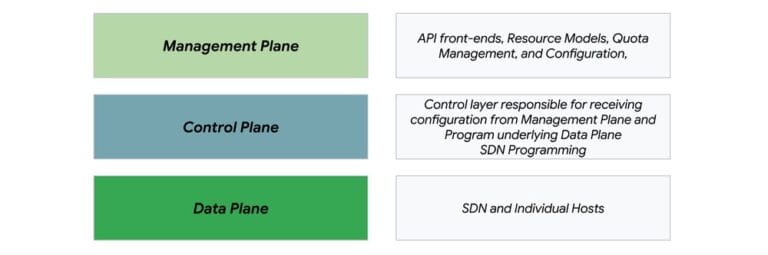

For large enterprises adopting a cloud platform, managing network connectivity across VPCs, on-premises data centers, and other clouds is critical. However, traditional models often lack scalability and increase management overhead. Google Cloud's Network Connectivity Center is a compelling alternative. As a centralized hub-and-spoke service for connecting and managing network resources, Network Connectivity Center offers a scalable and resilient network foundation. In this post, we explore Network Connectivity Center's architecture, availability model, and design principles, highlighting its value and the design considerations for maximizing resilience and minimizing the "blast radius" of issues. Armed with this information, you'll be better able to evaluate how Network Connectivity Center fits within your organization, and to get started.

The challenges of large-scale enterprise networks

Large-scale VPC networks consistently face three core challenges: scalability, complexity, and the need for centralized management. Network Connectivity Center is engineered specifically to address these pain points, thanks to:

Massively scalable connectivity: Scale far beyond traditional limits and VPC Peering quotas. Network Connectivity Center supports up to 250 VPC spokes per hub and millions of VMs, while upcoming features such as enhanced cross-cloud connectivity and firewall insertion will help prepare your network for future demands.

Smooth workload mobility and service networking: Easily migrate workloads between VPCs. Network Connectivity Center natively solves transitivity challenges through features like producer VPC spoke integration, which supports private services access (PSA) and Private Service Connect (PSC) propagation and streamlines service sharing across your organization.

Reduced operational overhead: Network Connectivity Center offers a single control point for VPC and on-premises connections and automates full-mesh connectivity between spokes, dramatically reducing operational burden.

Under the hood: Architected for resilience

Let's home in on how Network Connectivity Center stays resilient. A key part of that is its architecture, which is built on three distinct, decoupled planes.

(Figure: A very simplified view of the Network Connectivity Center and Google Cloud networking stack.)

Management plane: This is your interaction layer: the APIs, gcloud commands, and Google Cloud console actions you use to configure your network. It's where you create hubs, attach spokes, and manage settings.

Control plane: This is the brains of the operation. It takes your configuration from the management plane and programs the underlying network. It's a distributed, sharded system responsible for the software-defined networking (SDN) that makes everything work.

Data plane: This is where your actual traffic flows. It's the collection of network hardware and individual hosts that move packets based on the instructions programmed by the control plane.

A core principle that Network Connectivity Center applies across this architecture is fail-static behavior. If a higher-level plane (like the management or control plane) experiences an issue, the planes below it continue to operate based on the last known good configuration, and existing traffic flows are preserved. This helps ensure that, for example, a control plane issue doesn't bring down your entire network.

How Network Connectivity Center handles failures

A network's strength is revealed by how it behaves under pressure.
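
Before walking through specific failure modes, it may help to see the fail-static principle in miniature. The following Python sketch is a toy model, not Network Connectivity Center code or an API: `ControlPlane`, `DataPlane`, and `apply_intent` are hypothetical names, routes are plain strings, and lookups are exact-match rather than longest-prefix. It illustrates one property only: when the control plane can't program new state, the data plane keeps forwarding with the last known good configuration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


class ControlPlaneUnavailable(Exception):
    """Raised by the toy control plane when it cannot program new state."""


@dataclass
class ControlPlane:
    # Hypothetical stand-in for the distributed SDN control plane.
    healthy: bool = True

    def compile_routes(self, intent: dict[str, str]) -> dict[str, str]:
        # Turn management-plane intent (prefix -> next hop) into
        # programmable data-plane state. In this toy model it is just a copy.
        if not self.healthy:
            raise ControlPlaneUnavailable("cannot program new configuration")
        return dict(intent)


@dataclass
class DataPlane:
    # Last successfully programmed state ("last known good").
    routes: dict[str, str] = field(default_factory=dict)

    def forward(self, prefix: str) -> str | None:
        # Forwarding reads only already-programmed local state and never
        # calls the control plane. Simplified to an exact-match lookup.
        return self.routes.get(prefix)


def apply_intent(intent: dict[str, str], control: ControlPlane, data: DataPlane) -> bool:
    """Push new intent down the stack; fail static if the control plane is down."""
    try:
        new_routes = control.compile_routes(intent)
    except ControlPlaneUnavailable:
        # Fail static: leave the data plane untouched so existing flows continue.
        return False
    data.routes = new_routes
    return True


if __name__ == "__main__":
    control, data = ControlPlane(), DataPlane()
    apply_intent({"10.1.0.0/16": "spoke-a"}, control, data)

    control.healthy = False  # simulate a control plane disruption
    applied = apply_intent({"10.1.0.0/16": "spoke-b", "10.2.0.0/16": "spoke-c"}, control, data)

    print(applied)                      # False: the new intent was not programmed
    print(data.forward("10.1.0.0/16"))  # spoke-a: the existing route still works
    print(data.forward("10.2.0.0/16"))  # None: the new route never arrived
```

The key design choice in the sketch is that new state replaces old state only after the control plane succeeds; a failure leaves the previous state in place instead of clearing it.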
Network Connectivity Center's design is fundamentally geared toward stability, so that potential issues are contained and their impact is minimized. Consider the following design points:

Contained infrastructure impact: An underlying infrastructure issue, such as a regional outage, affects only resources within that specific scope. Because Network Connectivity Center hubs are global resources, a single regional failure won't bring down your entire hub; connectivity between all unaffected spokes remains intact.

Isolated configuration faults: We intentionally limit the "blast radius" of a configuration error through careful fault isolation. A mistake made on one spoke or hub stays isolated and won't cascade into failures elsewhere in your network. This is a crucial advantage over intricate VPC peering topologies, where a single routing misconfiguration can have far-reaching consequences.

Uninterrupted data flows: The fail-static principle insulates existing data flows from management plane and control plane disruptions. If a failure occurs, the network continues to forward traffic based on the last successfully programmed state, maintaining stability and continuity for your applications.

Managing the blast radius of configuration changes

Although an infrastructure outage affects the resources within its scope, Network Connectivity Center connectivity in other zones or regions remains functional. Critically, configuration errors are isolated to the specific hub or spoke being changed and don't cascade to unrelated parts of the network.

To further enhance stability and operational efficiency, we also streamlined configuration management in Network Connectivity Center. Updates are handled dynamically by the underlying SDN, eliminating the need for traditional maintenance windows for configuration changes. Changes are applied transparently at the API level and are designed to be backward-compatible, for smooth, non-disruptive network evolution.

Connecting multiple regional hubs

A Network Connectivity Center hub is a global resource, but a multi-region resilient design may call for regional deployments with a dedicated hub per region, which in turn requires connectivity across hubs. Network Connectivity Center does not offer native hub-to-hub connectivity, but the following methods allow communication across hubs and fulfill specific, controlled-access needs:

Cloud VPN or Cloud Interconnect: Use dedicated HA VPN tunnels or VLAN attachments to connect Network Connectivity Center hubs.

Private Service Connect (PSC): Use a producer/consumer model with PSC to provide controlled, service-specific access across hubs.

Multi-NIC VMs: Route traffic between hubs using VMs that have network interfaces in spokes of different hubs.

Full-mesh VPC Peering: For specific use cases such as database synchronization, establish peering between spokes of different hubs.

Frequently asked questions

What happens to traffic if the Network Connectivity Center control plane fails?

Due to the fail-static design, existing data flows continue to function based on the last known successful configuration. Dynamic routing updates stop, but existing routes remain active.

Does adding a new VPC spoke impact existing connections?

No. Adding a new spoke is a dynamic process, and existing data flows should not be interrupted.

Is there a performance penalty for traffic traversing between VPCs via Network Connectivity Center?

No. Traffic between VPCs connected by Network Connectivity Center gets the same performance as traffic over VPC peering.
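
To reason about the multi-hub options described above, it can help to model which spokes can reach each other. The sketch below is a hypothetical Python model, not a Network Connectivity Center API: `Topology`, `attach`, `add_bridge`, `path_kind`, and the method labels are illustrative names only. It encodes two points from this post: spokes on the same hub get full-mesh connectivity, and there is no native hub-to-hub connectivity, so cross-hub traffic needs a link you configure yourself. As a simplifying assumption, each bridge connects only the two spokes it names; whether routes propagate further depends on the method you choose and how it's configured, and that isn't modeled here.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Cross-hub methods listed in this post; illustrative labels, not API values.
BRIDGE_METHODS = {"ha-vpn", "interconnect", "psc", "multi-nic-vm", "vpc-peering"}


@dataclass
class Topology:
    # Which hub each VPC spoke is attached to (one hub per spoke).
    spoke_to_hub: dict[str, str] = field(default_factory=dict)
    # Explicit cross-hub links, keyed by an unordered pair of spoke names.
    bridges: dict[frozenset[str], str] = field(default_factory=dict)

    def attach(self, spoke: str, hub: str) -> None:
        self.spoke_to_hub[spoke] = hub

    def add_bridge(self, spoke_a: str, spoke_b: str, method: str) -> None:
        if method not in BRIDGE_METHODS:
            raise ValueError(f"unknown bridge method: {method}")
        if self.spoke_to_hub[spoke_a] == self.spoke_to_hub[spoke_b]:
            raise ValueError("spokes on the same hub are already full-mesh connected")
        self.bridges[frozenset((spoke_a, spoke_b))] = method

    def path_kind(self, src: str, dst: str) -> str | None:
        """Return how src reaches dst in this model, or None if it can't."""
        if self.spoke_to_hub[src] == self.spoke_to_hub[dst]:
            return "hub-full-mesh"
        # No native hub-to-hub connectivity: only an explicit bridge helps,
        # and (by assumption) only between the two spokes it names.
        return self.bridges.get(frozenset((src, dst)))


if __name__ == "__main__":
    topo = Topology()
    topo.attach("vpc-app-us", "hub-us")
    topo.attach("vpc-db-us", "hub-us")
    topo.attach("vpc-db-eu", "hub-eu")

    print(topo.path_kind("vpc-app-us", "vpc-db-us"))  # hub-full-mesh
    print(topo.path_kind("vpc-db-us", "vpc-db-eu"))   # None: different hubs
    topo.add_bridge("vpc-db-us", "vpc-db-eu", "vpc-peering")
    print(topo.path_kind("vpc-db-us", "vpc-db-eu"))   # vpc-peering
    print(topo.path_kind("vpc-app-us", "vpc-db-eu"))  # None: bridge is pairwise
```

The last lookup returning `None` reflects the pairwise assumption, which matches how a non-transitive link such as VPC Peering behaves; a richer model would track route propagation per method.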
Best practices for resilience

While Network Connectivity Center is a powerful and resilient platform, designing a network for maximum availability requires careful planning on your part. Consider the following best practices:

Leverage redundancy: Data plane availability is localized. To survive a localized infrastructure failure, deploy critical applications across multiple zones and regions.

Plan your topology carefully: Choosing your hub topology is a critical design decision. A single global hub offers operational simplicity and is the preferred approach for most use cases. Consider multiple regional hubs only if strict regional isolation or minimizing the control plane blast radius is a primary requirement, and be aware of the added complexity. Finally, even if you dedicate each hub to a single region, Network Connectivity Center hubs are still global resources, so during a global outage, management plane operations may be impacted regardless of regional availability.

Choose Network Connectivity Center for transitive connectivity: For large-scale networks that require transitive connectivity for shared services, choosing Network Connectivity Center over traditional VPC peering can simplify operations and lets you take advantage of features like PSC and PSA propagation.

Embrace infrastructure as code: Use tools like Terraform to manage your Network Connectivity Center configuration; this reduces the risk of manual errors and makes your network deployments repeatable and reliable.

Monitor network health: Regularly check the Google Cloud Service Health dashboard and your Personalized Service Health dashboard to stay informed about the status of Network Connectivity Center and other services.

Plan for scale: Be aware of Network Connectivity Center's high, but finite, scale limits (for example, 250 VPC spokes per hub) and plan your network growth accordingly.
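
The "Plan for scale" and transitive-connectivity practices above lend themselves to quick arithmetic. The Python sketch below is a back-of-the-envelope planner under stated assumptions: the 250 VPC-spokes-per-hub figure is the one cited in this post (check the current quotas documentation before relying on it), the 20% headroom default is an arbitrary illustrative choice, and the function names are hypothetical. It also contrasts the n(n-1)/2 peering relationships a direct full mesh of n VPCs needs with the n spoke attachments a hub needs.

```python
# Figure cited in this post; verify against current Network Connectivity Center quotas.
NCC_VPC_SPOKES_PER_HUB = 250


def full_mesh_peerings(vpc_count: int) -> int:
    """Peering relationships needed to connect every VPC pair directly."""
    return vpc_count * (vpc_count - 1) // 2


def hub_spoke_attachments(vpc_count: int) -> int:
    """With a hub, each VPC is attached once; the hub provides the full mesh."""
    return vpc_count


def hubs_needed(projected_vpcs: int,
                spoke_limit: int = NCC_VPC_SPOKES_PER_HUB,
                headroom_pct: int = 20) -> int:
    """Hubs required if each hub is kept `headroom_pct` percent below its limit."""
    if projected_vpcs < 0:
        raise ValueError("projected_vpcs must be non-negative")
    if not 0 <= headroom_pct < 100:
        raise ValueError("headroom_pct must be in [0, 100)")
    # Integer math avoids float rounding (250 * 80 // 100 == 200).
    usable = spoke_limit * (100 - headroom_pct) // 100
    if usable < 1:
        raise ValueError("headroom leaves no usable spokes per hub")
    # Integer ceiling division; always at least one hub.
    return max(1, -(-projected_vpcs // usable))


if __name__ == "__main__":
    for vpcs in (40, 180, 450):
        print(f"{vpcs} VPCs: {full_mesh_peerings(vpcs)} peerings vs "
              f"{hub_spoke_attachments(vpcs)} spoke attachments, "
              f"{hubs_needed(vpcs)} hub(s) with 20% headroom")
```

A result greater than one implies a multi-hub design and, because hubs aren't natively connected, one of the cross-hub methods described earlier. Since a single global hub is the preferred approach for most use cases, treat that result as a prompt to revisit the topology rather than an automatic answer.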
A simple approach to scalable, resilient networking

Network Connectivity Center removes much of the complexity from enterprise networking, providing a simple, scalable, and resilient foundation for your organization. By understanding its layered architecture, fail-static behavior, and design principles, you can build a network that not only meets your needs today but is ready for the challenges of tomorrow. To get started, review the design considerations and the official Network Connectivity Center documentation, or contact Google Cloud teams for guidance on complex, multi-hub network designs.